“What’s OpenAI? Is that good?”

Tech people assume AI adoption is everywhere. It isn't. Here's why that matters.

Earlier this week, while I was out on my morning walk by the Sausalito Houseboats with my dog Peanut, my mom called to check in. She was driving back from the Catholic high school outside of Philadelphia where she teaches health + PE and wanted to chitchat.

“So tell me what’s new???” she asked in her bubbly, cheerful, thick South Jersey accent. My fiancé has been knee-deep in interviews for the last two weeks, and had just found out that morning that he had an offer from OpenAI. That’s a big accomplishment for anyone, but especially for an immigrant kid who grew up poor in communist Russia and then moved to the US at 12 not speaking any English. I’m so proud of him! The moment was completely lost on my mom. Here’s how the conversation went after I told her “the news”:

Mom: “What’s OpenAI? Is that good?!”

Me: “Mom, you don’t know what OpenAI is!?!… It’s like one of the most famous companies in the world right now. It’s ChatGPT… They made ChatGPT!!”

Mom: “Oooohh… Chat… G P T..? Chat…”

Me: “CHAT GPT!!! Mom!!!”

Mom: “Oh well you have to show me how to use that again when you come home!”

Me: “Mom… OMG…”

If you’ve been feeling frazzled and fried trying to keep pace with an accelerating explosion of agentic AI, give Peggy Robinson a quick call. She’ll bring your pulse down.

My mom has never found tech intuitive.

My mom once walked into a Sprint store in full Karen mode, looking like Shrek with her brand-new AirPods in backwards, convinced they were broken.

One of my most vivid childhood memories is the day she couldn’t figure out the “double-click” on the family computer’s mouse. She’s in the over-60 crowd and, like many of our parents, can barely use her iPhone.

As hilarious, charming and technologically challenged as my boomer mom can be without realizing it, she’s not a total outlier when it comes to AI awareness and adoption. In fact, I’d argue people like my mom are the canary in the coal mine.

A few weeks ago I went to the opening-day ribbon-cutting ceremony for my friend’s biotech startup.[1] As part of the celebration, they were offering tours of their lab, where they engineer naturally occurring microbes to produce more sustainable fertilizer.

On the tour, I got to chat with a group of female Stanford biotech PhD candidates. As we suited up in our white coats and goggles, I was the only non-biotech-scientist in the group, so I went for the lowest-hanging small-talk fruit: “So how are you guys using AI in your research?” The consensus across the group: they weren’t really using it at all. None of them were paying for premium model access. At most, they’d dabbled with ChatGPT’s free version to summarize some academic papers here and there. One woman noted that it’s not really talked about much at their lab on campus. On Stanford’s campus.

Now that conversation surprised me. And I bet it mildly surprises you too.

And it got me thinking… if you live and work in San Francisco, it’s very easy to become Bubble Blind.[2]

Here’s why that is a problem:

Naturally, San Francisco has become the heartbeat of the global AI industry. Every significant AI company has an HQ in SF. That means a significant number of the people building this technology live here.

AI companies built by people inside the bubble design for people inside the bubble. If you’ve never talked to a high school teacher who doesn’t know what OpenAI is, you probably aren’t building tools she could actually use. If AI builders aren’t intentional about stepping outside of their bubbles, these humanity-altering tools stay inaccessible to the people who most need the productivity gains, i.e., the majority of people.

It’s the dogfood paradox.[3]

We were guilty of it at Slack. At Slack, we loooved talking about dogfood. We did everything in Slack. In 8.5 years at Slack, I don’t think I sent a single work-related email. We took pride in our unique ability to empathize with our users, and often assumed we could intuitively build for their needs because we were all using the same product every day. We trusted our dogfooding process. It was our secret sauce.

Most of the time, dogfooding is a great tool for developing empathy with your customers. When your problems are the same as your users’, it’s easy to intuit product direction. But that same intuition can become misleading over time if everyone making decisions is effectively a power user.

For example, Slack always used Slack in a very Slack-y way. I was often humbled when I’d see how differently friends at other companies (some of whom were our biggest customers) were using the product. Public channels were supposed to replace internal emails. Slack is actually an acronym that stands for “Searchable Log of All Conversation and Knowledge”. In our Slack world, gone were the days of new hires losing access to the contextual conversations of their predecessors who’d moved on to new jobs. In reality, most of our customers were still using email for all or most internal communication. The majority of their Slack chats were private DMs. So much for the collectively searchable log of knowledge.

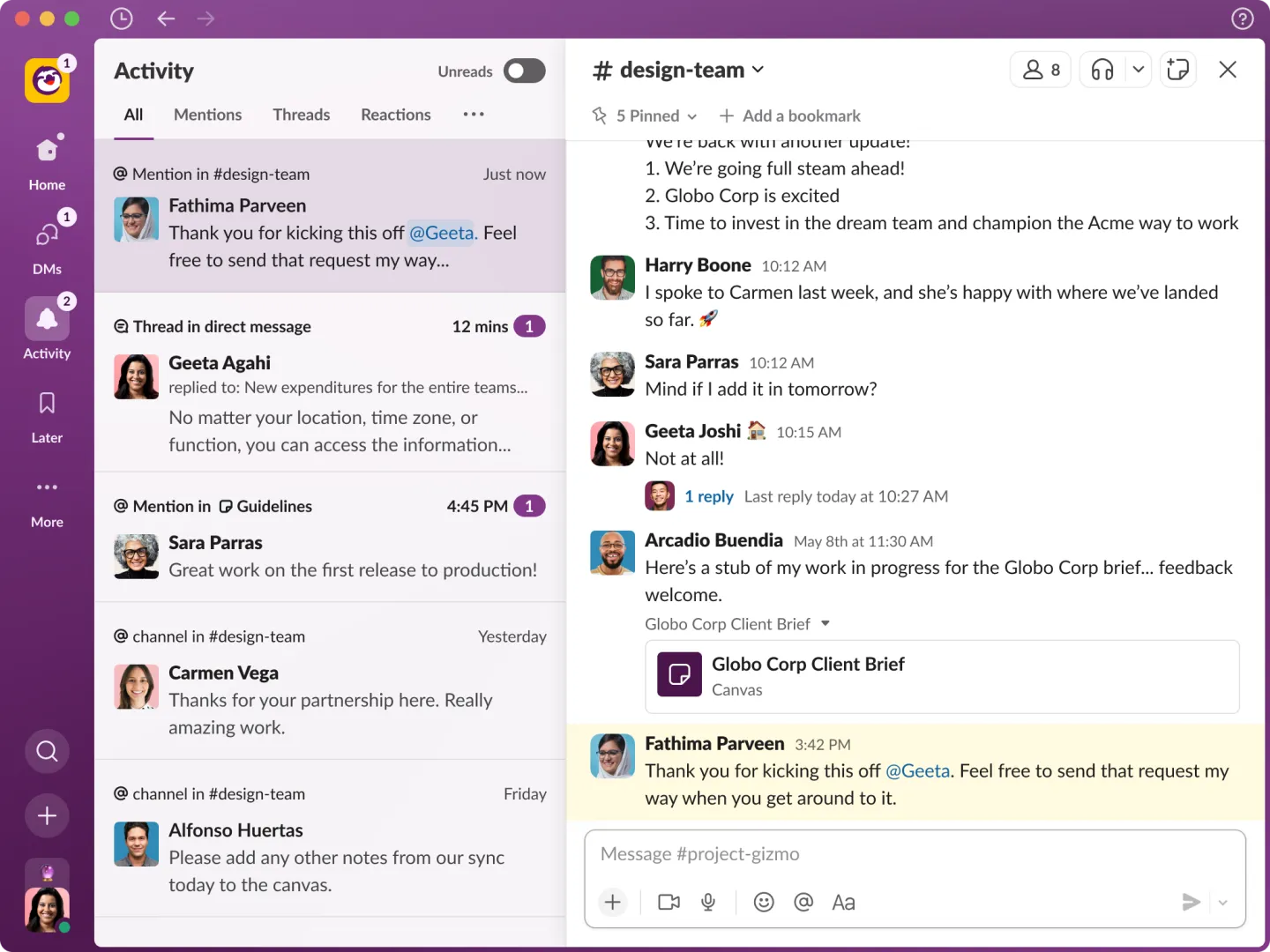

The blind spot grew in lockstep with the company. Over the last few years I was there, Slack prioritized roadmap features that optimized for power users, with the hope that more teams would start to use Slack for everything the way we did at Slack. Lists aimed to replace Airtable. Canvases were the new Google Docs. Slack, the eventual Single Source of Truth. To us, it made sense. We got enormous value out of Slack and were driven to help our customers do the same.

But something shifted when our ~3k-person company merged into a ~100k-person company. Slack’s needs started to look very different from those of the majority of our customers, who operate small and mid-sized companies. Some of our biggest redesigns are a good example.

The value of this redesign seemed obvious to everyone working at Slack. We needed a better way of managing our own firehose of information.

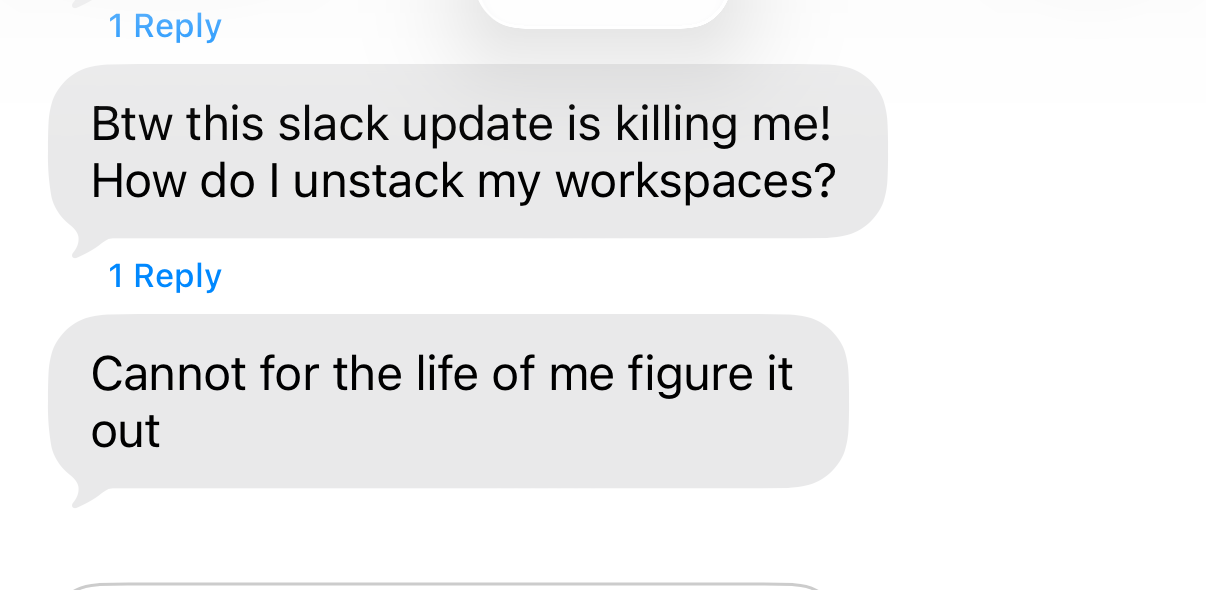

But to many of our customers, it was overkill. I remember getting a text from an old friend from college.

We’d made something great for big companies that heavily use Slack — ourselves. And that’s not a knock on Slack’s product team — Slack continues to attract the best product thinkers in the industry for a reason. Many companies lump engineering, product and design together. At Slack, we called it PDE: Product, Design, Engineering. Product was always the top priority. And it’s part of the reason it’s a household name today.

The point I’m trying to make is about blind spots.

During my last few months at the company, one “high priority” project I was tasked to lead halted abruptly after nearly a month of prototyping, when we realized the entry point for the designs had only been clicked by ~3k Slack users. We’d assumed wide adoption because the feature had been live for months and was heavily used internally. It was a humbling moment for a high-performing team.

To this day, it always makes me a little sad when I realize how many awesome features of Slack go undiscovered and unused.

Slack built its reputation on being customer- and product-obsessed. But at some point, our secret sauce, dogfooding, started to become less meaningful. The majority of Slack teams are not 100k-person organizations. The dogfood culture was so entrenched and unquestioned that we didn’t notice our roadmap had been optimizing for a handful of companies.

The same dynamic is playing out in AI right now. At a much higher velocity, with much higher stakes.

What happens when this bubble collides with $122 billion in new AI investment?

Just a few days ago, OpenAI announced it’s raising $122 billion “to accelerate the next phase of AI”. The success of these enormous capital raises hinges on a future world with broad, global, population-wide AI adoption.

If you’re paying attention to AI, the stakes feel high right now. It’s everywhere. The implications for the future feel incomprehensible. Scary. Exciting. The more aware you become, the more anxious you get about the consequences of falling behind.

As tech people, we’re flying sidecar on a motorcycle with no windshield or goggles, holding on for our lives as AI accelerates at impossible speeds. It’s easy to forget that the rest of the world is still contentedly sauntering through the pre-AI status quo.

I don’t know about you, but this feels like a good moment to slow down for a second and think about the Peggy Robinsons of the world.

We all have blind spots. The dangerous ones are the ones we’ve stopped looking for.

This week’s challenge: go out and find someone who’s never heard of OpenAI. Let me know how it goes.

Til next week,

Carly

[1] Switch BioWorks engineers naturally occurring microbes that produce fertilizer directly on plant roots, reducing costs, runoff, and supply chain risks by making fertilizer on the farm, where it’s needed.

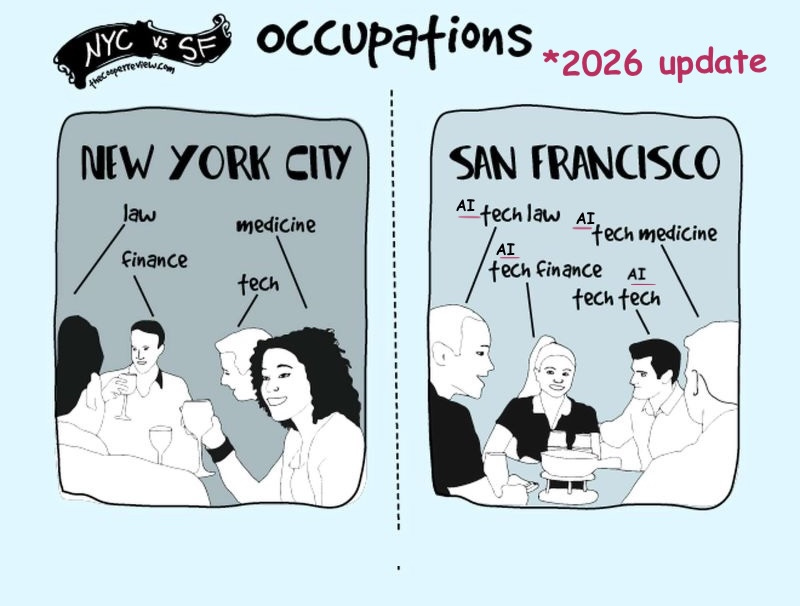

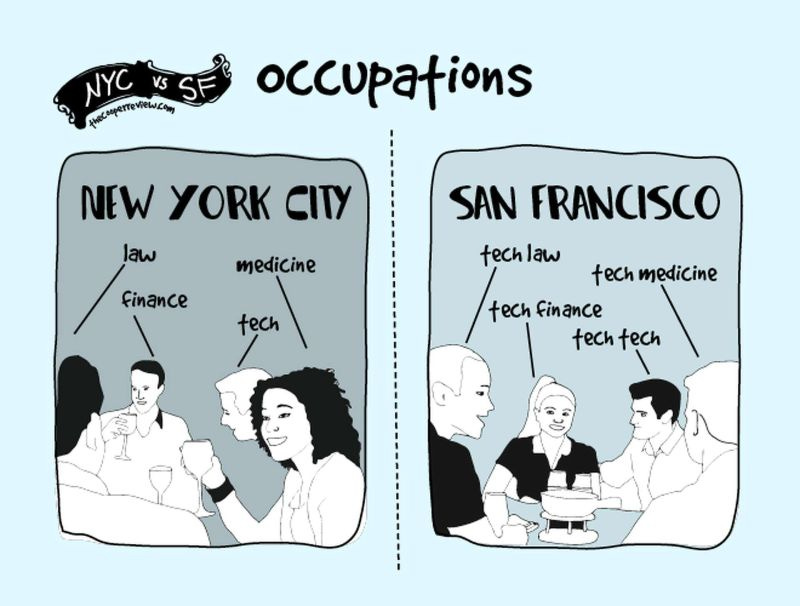

[2] People in tech are used to hearing debates about “bubbles”. You can’t live in the San Francisco Bay Area and not encounter them. Restaurants, coffee shops, parents strolling their babies, run clubs, dinner parties: it doesn’t take long before someone is talking about AI and bubbles. San Francisco is unique in this way. I don’t think there is another city on earth where it’s genuinely hard to meet someone who doesn’t work in tech. Someone sent me this meme when I was first moving from NYC to San Francisco in 2015:

That meme turned out to be comically true. A more accurate depiction of San Francisco in 2026 would probably look more like this:

[3] In tech, it’s common to refer to using your own product as “eating your own dog food”. The philosophy behind it is that when employees use the product they are building and selling, both customers and the company benefit. You get an edge on your competitors by finding pain points faster, intuiting product solutions, and developing deep customer empathy that translates to increased customer loyalty and profits. However, if the employees are using the product differently than the customers, this logic can break down and actually create blind spots and unnecessary UX complexity. “Dogfooding” is usually a good thing, but it has to be balanced with real user data. When things are moving fast, it’s easy to default to only testing how your company tends to use the product internally.

**PS:** If you read this blog when I first published it on April 3, I made a few edits on April 7 (clarifying my point about Slack and adding some Peggy Robinson highlights for you to enjoy). I’m still getting back into the swing of this whole writing process. Publishing weekly essays after not publishing anything for 10 years has been a whirlwind of learning. Thanks for reading and supporting this work in progress!

Well said, Carly! 👏 I think anyone working in SaaS right now (and I imagine especially on the west coast) can become bubble blind so easily. I am definitely guilty of it. The last SaaS I worked for and my current one both serve highly regulated, compliance-driven industries. In both cases I can talk to one customer who tells me “this industry will never adopt AI” and then I go from that convo to another customer who says “show me your AI roadmap or I’m not renewing.” 🙃 It’s definitely a delicate balance of bringing certain customers along for the AI journey and making big bets that might risk leaving the “no nevers” behind. What I so easily forget when I get excited about innovation opportunities (and there are so many right now!!) is that I’m not any of our user personas by any stretch, so I have to find that sweet spot among our customers’ stated goals/preferences, their actual behavior, and our business goals. I find that constraint to be one that breeds some really transformative innovation. I just have to remember to stay within it.

So easy to have our blindspots where we are and think that all people talk about everywhere in the world is AI. It's not!

P.S. Congrats to your fiancé on the big offer!